Are you falling for the AI horsepower trap?

In today’s race to adopt enterprise AI, it’s easy to believe that the latest, most powerful model is always the smartest investment. After all, who wouldn’t want the “best” technology powering their business? But what if that instinct, choosing the biggest, most advanced model, could quietly turn a promising project into a financial misstep?

The reality is that the most capable AI models often come with hidden costs that scale rapidly, especially in high-volume scenarios. What looks like a minor difference in per-use pricing can, over thousands of transactions, quietly erode your project’s ROI or even tip it into the red.

This article exposes the AI horsepower trap and shows why the “best” model isn’t always the right one for your business. Drawing on a real-world case study (automating data extraction from hundreds of thousands of pages each month), we’ll break down the true costs, compare model options, and provide a practical framework to help you make smarter, more profitable AI decisions.

Understanding the New Currency of AI: Tokens

When it comes to modern AI, the old rules of software pricing no longer apply. Instead of paying for a license or a flat subscription, you pay for what you use, and the unit of measurement is the token.

What’s a token?

Think of a token as a tiny piece of data. For text, it might be a word or even part of a word. For images, it’s a chunk of visual information. Every time you send data to an AI model, it gets broken down into tokens, and you’re billed for each one processed.

For example, the phrase “AIM Consulting is the best there is…no doubt about that.” is 13 tokens on GPT-3.5 and GPT-4, but the same phrase is 14 tokens on GPT-4o. The difference may seem trivial, but at enterprise scale, these small variations add up quickly.

Why does this matter?

Because every token costs money. And when you’re processing thousands (or millions) of documents, even a fraction of a cent per token can mean the difference between a profitable project and a financial drain.

In our case study, we estimated that each document averaged 6 pages, with each page translating to about 1,750 input tokens (roughly 10,500 input tokens per document). The structured data we needed back added another 250 output tokens per document. Multiply that by 2,000 documents per day, and you’re looking at about 21.5 million tokens a day, or hundreds of millions of tokens each month.
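
The arithmetic behind that estimate can be sketched in a few lines of Python. The workload figures come from the case study; the 30-day month is an assumption, since the billing period is not stated.

```python
# Workload figures quoted in the case study.
PAGES_PER_DOC = 6
INPUT_TOKENS_PER_PAGE = 1_750
OUTPUT_TOKENS_PER_DOC = 250
DOCS_PER_DAY = 2_000
DAYS_PER_MONTH = 30  # assumption: the billing period is not stated in the article


def monthly_token_volume(days=DAYS_PER_MONTH):
    """Return (input_tokens, output_tokens) for one month of processing."""
    docs = DOCS_PER_DAY * days
    input_tokens = docs * PAGES_PER_DOC * INPUT_TOKENS_PER_PAGE
    output_tokens = docs * OUTPUT_TOKENS_PER_DOC
    return input_tokens, output_tokens


input_tokens, output_tokens = monthly_token_volume()
print(f"input:  {input_tokens:,} tokens/month")
print(f"output: {output_tokens:,} tokens/month")
print(f"total:  {input_tokens + output_tokens:,} tokens/month")
```

Input tokens dominate the total by a wide margin, so for a workload shaped like this one the input price is likely to matter far more than the output price.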

The takeaway:

Understanding and accurately estimating your token usage isn’t just a technical detail; it’s a critical business lever. Before you commit to any AI solution, use tools like OpenAI’s Tokenizer or similar resources to model your expected consumption. This simple step can help you avoid costly surprises and make more informed, data-driven decisions about which AI model is best suited to your needs.
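
Once you have token counts, turning them into a monthly cost estimate is straightforward. The sketch below is a minimal illustration. The model names and per-million-token prices are placeholders, not real vendor rates, and the volumes assume the case-study workload over a 30-day month. Substitute the current published prices for the models you are actually evaluating.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenPrice:
    """Price in US dollars per one million tokens."""
    input_per_million: float
    output_per_million: float


def monthly_cost(input_tokens, output_tokens, price):
    """Estimated monthly spend in dollars for the given token volumes."""
    return (
        input_tokens / 1_000_000 * price.input_per_million
        + output_tokens / 1_000_000 * price.output_per_million
    )


# Hypothetical prices for illustration only; these are NOT real vendor rates.
HYPOTHETICAL_PRICES = {
    "large-model": TokenPrice(input_per_million=2.00, output_per_million=8.00),
    "small-model": TokenPrice(input_per_million=0.50, output_per_million=2.00),
}

# Case-study workload: 2,000 docs/day, 6 pages/doc, 1,750 input tokens/page,
# 250 output tokens/doc, over an assumed 30-day month.
INPUT_TOKENS = 2_000 * 30 * 6 * 1_750
OUTPUT_TOKENS = 2_000 * 30 * 250

for name, price in HYPOTHETICAL_PRICES.items():
    cost = monthly_cost(INPUT_TOKENS, OUTPUT_TOKENS, price)
    print(f"{name}: ${cost:,.2f} per month")
```

Keeping prices in one table makes it easy to rerun the estimate whenever a vendor changes its rates or a new model becomes a candidate.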

The Financial Chasm: Why Model Choice Matters

When it comes to enterprise AI, the difference between a profitable project and a financial sinkhole often comes down to one decision: Which model do you choose?

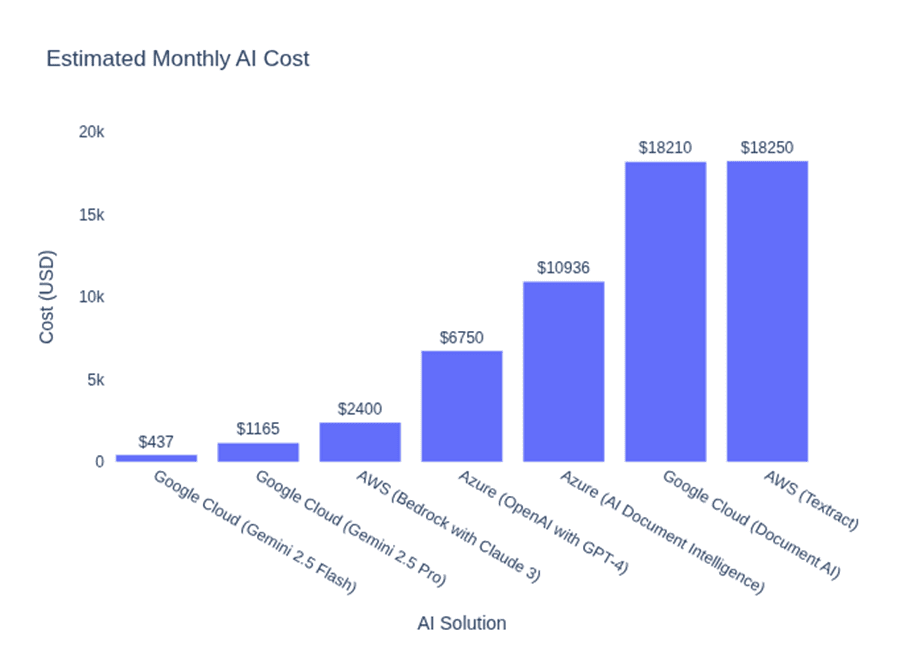

Our case study, which automated data extraction from hundreds of thousands of pages each month, revealed just how dramatic the cost differences can be.

To identify the true costs and tradeoffs, we structured our evaluation into three main comparative analyses:

1. Specialized AI vs. Modern LLMs

Decision: Should you use a traditional, purpose-built AI service or a general-purpose large language model (LLM) for document data extraction?

Key Findings:

| Analysis Type | Model/Service | Key Features | Estimated Monthly Cost |
|---|---|---|---|
| Specialized AI | Azure AI Document Intelligence | Designed for structured tasks, often with robust accuracy and compliance features. | ~$10,936 |
| Flagship LLM | Google Gemini 2.5 Pro | Flexible, general-purpose, multimodal large language model. | ~$1,165 |

Insight:

Modern LLMs can cut costs by close to 90% for certain high-volume tasks compared to specialized services (here, from ~$10,936 to ~$1,165 per month), without sacrificing quality for many use cases. Leaders should challenge default assumptions and rigorously evaluate whether a general-purpose model can meet their needs.

2. Flagship vs. Efficient LLMs

Decision: Within the LLM family, should you choose the most advanced model or a lighter, more efficient version?

Key Findings:

| Analysis Type | Model/Service | Key Features | Estimated Monthly Cost |
|---|---|---|---|
| Flagship LLM | Gemini 2.5 Pro | Superior reasoning and performance for complex tasks. | ~$1,165 |
| Efficient LLM | Gemini 2.5 Flash | Optimized for speed and cost, still meets functional requirements for many business scenarios. | ~$437 |

Insight:

Choosing the “best” model can increase costs by over 160% with no added business value if your use case doesn’t require advanced capabilities. The right-sized model often delivers all the accuracy you need at a fraction of the price. Always match the model’s capabilities to your actual business requirements, not just technical benchmarks.

3. Managed APIs vs. Self-Hosted Open Source

Decision: Should you pay for a managed AI API, or try to save by hosting a “free” open-source model yourself?

Key Findings:

| Analysis Type | Model/Service | Key Features | Estimated Monthly Cost |
|---|---|---|---|
| Managed API | (e.g., Gemini 2.5 Pro API) | Pay-per-use, no infrastructure or maintenance overhead. | ~$1,165 |
| Self-Hosted Open Source LLM | Phi-3-vision | Requires 24/7 GPU infrastructure and dedicated MLOps personnel. | ~$5,430 |

Insight:

The “free” model is rarely free in practice. Hidden costs, especially for infrastructure and skilled personnel, can make self-hosting far more expensive and riskier than using a managed service. For most single-use enterprise applications, managed APIs are the safer, more cost-effective choice.

The Bottom Line for Smarter AI Model Selection

Each of these analyses underscores a central truth: the most powerful or hyped AI model is not always the best business decision. Leaders must take a data-driven approach, rigorously estimate real-world usage, and select the solution that aligns with both technical needs and financial realities.
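
To see how the percentages in the three analyses fall out of the estimated monthly costs, here is a small sketch that ranks the options and computes the relative differences. The dollar figures are the approximate estimates from the tables above; the helper name `pct_change` is ours.

```python
# Approximate monthly cost estimates (USD) from the three comparisons.
MONTHLY_COST_USD = {
    "Azure AI Document Intelligence": 10_936,
    "Gemini 2.5 Pro (managed API)": 1_165,
    "Gemini 2.5 Flash": 437,
    "Phi-3-vision (self-hosted)": 5_430,
}


def pct_change(baseline, other):
    """Percent change in cost when moving from `baseline` to `other`."""
    return (other - baseline) / baseline * 100


# Rank the options from cheapest to most expensive.
cheapest = min(MONTHLY_COST_USD.values())
for name, cost in sorted(MONTHLY_COST_USD.items(), key=lambda kv: kv[1]):
    print(f"{name:<32} ${cost:>7,}/mo  {cost / cheapest:5.1f}x the cheapest option")

pro = MONTHLY_COST_USD["Gemini 2.5 Pro (managed API)"]
azure = MONTHLY_COST_USD["Azure AI Document Intelligence"]
flash = MONTHLY_COST_USD["Gemini 2.5 Flash"]
hosted = MONTHLY_COST_USD["Phi-3-vision (self-hosted)"]

print(f"Specialized -> flagship LLM: {pct_change(azure, pro):+.1f}%")
print(f"Efficient -> flagship LLM:   {pct_change(flash, pro):+.1f}%")
print(f"Managed API -> self-hosted:  {pct_change(pro, hosted):+.1f}%")
```

Note that the direction of each comparison matters: the drop from the specialized service to the flagship LLM is measured against the larger number, while the increase from the efficient model to the flagship is measured against the smaller one.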

The Final Check: Why a Proof of Concept is Non-Negotiable

Even the most thorough financial analysis is only a hypothesis until it’s tested in your real-world environment. That’s why a rigorous, data-driven Proof of Concept (PoC) is essential before scaling any AI solution.

What should your PoC validate?

- Accuracy: Does the chosen model, especially if it’s a more efficient, lower-cost option, meet your business’s minimum accuracy threshold? Test it on real data, not just benchmarks.

- Latency: Is the end-to-end processing time fast enough for your operational needs? Even a cost-effective model is a poor fit if it can’t keep up with your workflow.

- Cost: Are your token and usage estimates accurate? The PoC should reveal the true total cost of ownership (TCO) for your specific workload, including any hidden or variable expenses.
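
One way to make these three checks concrete is a simple pass/fail gate over your PoC results. The sketch below is illustrative only: the `PocSample` structure, the thresholds, and the token prices are all hypothetical and should be replaced with your own requirements and your vendor's current rates.

```python
from dataclasses import dataclass


@dataclass
class PocSample:
    """One document processed during the PoC (hypothetical record layout)."""
    correct: bool        # did the extraction match the ground truth?
    latency_s: float     # end-to-end processing time in seconds
    input_tokens: int
    output_tokens: int


def evaluate_poc(samples, *, min_accuracy, max_p95_latency_s, max_cost_per_doc,
                 input_price_per_million, output_price_per_million):
    """Summarize accuracy, p95 latency, and cost per document against thresholds."""
    n = len(samples)
    accuracy = sum(s.correct for s in samples) / n

    # Nearest-rank 95th percentile, computed with integer math.
    latencies = sorted(s.latency_s for s in samples)
    rank = (95 * n + 99) // 100  # ceil(0.95 * n)
    p95_latency = latencies[rank - 1]

    total_cost = sum(
        s.input_tokens * input_price_per_million
        + s.output_tokens * output_price_per_million
        for s in samples
    ) / 1_000_000
    cost_per_doc = total_cost / n

    checks = {
        "accuracy": accuracy >= min_accuracy,
        "latency": p95_latency <= max_p95_latency_s,
        "cost": cost_per_doc <= max_cost_per_doc,
    }
    return {
        "accuracy": accuracy,
        "p95_latency_s": p95_latency,
        "cost_per_doc": cost_per_doc,
        "checks": checks,
        "passed": all(checks.values()),
    }


# Synthetic example: 20 documents, one extraction error, placeholder prices.
samples = [
    PocSample(correct=(i != 0), latency_s=1.0 + i * 0.1,
              input_tokens=10_500, output_tokens=250)
    for i in range(20)
]
report = evaluate_poc(
    samples,
    min_accuracy=0.95,
    max_p95_latency_s=3.0,
    max_cost_per_doc=0.03,
    input_price_per_million=2.00,   # hypothetical price
    output_price_per_million=8.00,  # hypothetical price
)
print(report)
```

In a real PoC, the samples would come from your own documents with human-verified ground truth, and the token counts would come from the usage data your provider returns with each request.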

Why is this step critical?

A well-designed PoC isn’t just a technical demonstration; it’s a business validation process. It gives you the confidence that your AI solution will deliver the expected ROI, and it helps you avoid costly surprises that can arise from scaling the wrong model or architecture.

Bottom line:

Never skip the PoC. It’s your best defense against the AI horsepower trap and ensures your investment is grounded in reality, not just projections.

Conclusion & Next Steps

The allure of the “best” and most powerful AI models is undeniable, but as we’ve seen, that instinct can quietly undermine your project’s ROI. The real differentiator isn’t raw horsepower; it’s the discipline to match your AI solution to your business’s true needs, operational realities, and financial constraints.

Key takeaways:

- The most advanced model is often not the most cost-effective choice for a given use case.

- Small differences in per-token costs can add up to massive financial impacts at scale.

- The “free” open-source route often comes with hidden infrastructure and personnel costs.

- A rigorous, data-driven Proof of Concept is your best safeguard against costly surprises.

What should leaders do next?

- Challenge assumptions: Don’t default to the most powerful model. Demand a business case for every technology choice.

- Estimate and validate: Use real data to model your expected usage and costs before committing.

- Run a PoC: Test accuracy, latency, and total cost of ownership in your own environment.

- Partner wisely: Work with advisors who understand both the technical and financial dimensions of AI adoption.

By focusing on fit, not flash, you’ll avoid the AI horsepower trap and build a foundation for sustainable, profitable innovation.

Ready to make smarter, more profitable AI decisions?

Contact AIM Consulting today to discuss how we can help you align your AI investments with your business goals, and avoid the hidden costs of the AI horsepower trap.